The ongoing expansion and globalization of large business and public organizations simultaneously support and are supported by information and communication technology. There is a broad consensus about this mutually reinforcing relationship, but there is hardly any agreement about how this process unfolds dynamically. Developing and using the information infrastructures these organizations require has traditionally been regarded as a predominantly technical endeavor (Gunton, 1989). This is no longer the case, as a rapidly expanding body of literature addressing an array of issues of social, economic, institutional, political and strategic nature demonstrates (Kahin and Abbate, 1995). Most relevant to us is the subset of this literature focusing on how the development and use of information infrastructure is interwoven with social and strategic issues in business organizations and in developing countries. This body of work explores a number of issues including: the heterogeneity of information systems due to different local needs (Davenport, 1998), the inscription of interests into artifacts (Bloomfield et al., 1997; Sahay, 1998), managerial approaches (Weill and Broadbent, 1998), local resistance to top-down initiatives (Ciborra, 1994), how basic design assumptions get taken for granted (Bowker and Star, 1999), organizational politics (Ciborra, 2000), and how business strategies get worked out (Currie and Galliers, 1999).

We aim to explore one aspect of this problem complex, namely the negotiations around striking a balance between the need for such information infrastructures to adapt to the various local contexts they are to operate across and the need to cope with this complexity by leaning towards universal solutions. The way universal solutions, predominantly from the developed countries and economies, need, but notoriously fail, to be negotiated against the needs of developing countries illustrates the problem (Braa, Monteiro and Reinert, 1995; Hanna, Guy and Arnold, 1995; Lind, 1991; Ryckeghem, 1996; Sahay, 1998; Sahay and Walsham, 1997). In essence, this amounts to exploring the tensions arising from two different strands of reasoning. The former, closely aligned with readily recognizable concerns for curbing complexity, reducing risk and maintaining control, is the argument that the only viable way to establish a global information infrastructure is to adhere to uniform, standardized solutions (Weill and Broadbent, 1998). The latter, by now well iterated and largely internalized, argues for the necessity of adapting information systems to local, situated and contextual work settings (Ciborra, 1994; Kyng and Mathiassen, 1997; Suchman, 1987).

Beyond the intellectually stimulating, but not necessarily equally relevant, exercise of breaking down dichotomies and replacing them with nuances, there is a real need for such accounts (Bowker and Star, 1999; Timmermans and Berg, 1997). Information infrastructures like the one discussed in our case, which are to support globally dispersed, highly interdependent work, have to balance the two arguments. Supporting such work would simply not be possible without this balance. Our analysis explores questions like: what are the consequences of emphasizing too strongly the unique, local and contextual solutions or a too uniform solution; how is the boundary between the global and the local drawn and maintained; what are the "costs", and for whom, of adopting global solutions; what are the implications for control and management of such efforts; if the global solution is not perceived as an iron cage against which local adoption strategies are waged, how should one describe and conceptualize local use of information infrastructure?

Empirically, we examine the challenges concerning the design and implementation of large-scale infrastructural information systems for heterogeneous environments. We draw upon material from an ongoing case study of a Scandinavian-based but globally operating maritime classification company that we dub MCC. In order to manage and cultivate its operations world-wide, MCC is faced with the challenge of balancing the need to streamline and standardize its operations across its sites against the well-known need to tailor information systems to local needs. MCC’s need to find a workable balance is acute. This is because their key asset and core competence is their ability to deliver high-quality surveys of ships world-wide. As ships are mobile, this entails that the surveys MCC conducts need to be distributed. Given the increased pressure in international shipping for cutting back on slack and improving efficiency, it is prohibitive for ships to spend extra time in ports "merely" for surveys. For MCC, this implies that the survey work for one ship may start in a port in one country with one surveyor, continue to the next port for the next surveyor to pick up where the first one left off before finishing the survey in a third port in a third country with a third surveyor. MCC's customers include shipyards, manufacturers, ship owners and national authorities. It is essential that the customers perceive this as one survey; that MCC’s services, not only their technology, are standardized (Leidner, 1993). For this to be attainable, the level of standardization of the surveyors’ work tasks has to increase significantly.

MCC has since 1999 been implementing a global information system to support surveyors’ work. The "Surveyor Support System", which has been regarded as fairly successful, has gained momentum and is currently used throughout 130 different offices world-wide. Prior to the implementation of this system, MCC’s entire information infrastructure for reporting surveys was primarily based on paper-based reports. With this paper-based infrastructure it was prohibitive to support a distributed survey process due to the lag in updated information. Consequently, the Surveyor Support System enables a completely new, distributed way of conducting survey work but is currently struggling to transform existing work practices that do not use the system.

The remainder of this paper is organized as follows. Section 2 elaborates our theoretical framework. We review the arguments and motivations for universal, standardized solutions before turning to the empirical evidence and analytic arguments for the situated, local design of any information system. In section 3, our research design is described and discussed. Section 4 presents the backdrop for our case by providing an overview of the organization of MCC, historical material, the IS project and a description of the surveyors’ work. Section 5 provides empirical illustrations of surveyors’ work and trade-offs between local and global concerns. In section 6, we analyze further the costs associated with working standards. Section 7 offers a few concluding remarks where we sum up some implications for large-scale, infrastructural information systems development and use in general. This has implications also for the "costs" – i.e. amount of improvisations and additional work – involved in transferring information systems from a context in the developed world to one in developing countries.

- Why universal solutions?

- The argument for situated, contextual design - once again

- The pragmatic turn: making work explicit

The traditional motivation for uniform solutions is derived from an interest in rationalization inspired by Fordist ideals of production (Yates, 1989). Hence, this motivation is grounded in fairly general principles and practices of production. Despite this, it exercises an influential role in portraying it as "obvious" that standardized solutions are beneficial (Hanseth and Monteiro, 1997; Monteiro and Hepsø, 2000; Williams, 1997). Standards, however, are never neutral, as emphasized in studies of transferring (Western) standard solutions to developing countries (Hanna, Guy and Arnold, 1995; Sahay, 1998). These ideals, principles and practices of standardization, so influential in the production of goods, have since been applied also to service work of the kind MCC’s surveyors conduct. A beautiful demonstration of standardized service work is the ethnographic study of Leidner (1993) where she looks at one extreme case (McDonald's) as well as an insurance company. Hence, also for service work, standardization is perceived as a means for rationalization. Additionally, the development and use of comprehensive, interconnected and integrated modules of information systems – in short, information infrastructure – is driven by an ambition to extend the operations of the organization across many geographical locations. A key issue in realizing this ambition is to find a way to enforce some notion of control and coherence across the different contexts. As programmatically stipulated by Mintzberg (1983) and demonstrated historically by Yates (1989), one strategy to coordinate and organize geographically dispersed work is through standardization. Standardization enables coordination, which in turn enables the exercise of control over distance (Law, 1986). This is typically aligned with the interests of management (Ciborra, 2000) or developed countries (Sahay, 1998).
A ruthlessly single-minded, compelling, but ultimately flawed (Kling, 1991), elaboration of this is the argument by Beniger (1986) where organizations constantly experience crises, that is, situations beyond their control. In response, solutions for coordination and standardization are devised which resolve the crises. But the newly gained sense of control does not prevail for long as it subsequently functions as a platform for new operations – which then lead to a new "control crisis" and so forth. From this point of view, the current challenges facing internationally oriented organizations with regard to extending and standardizing their operations world-wide are but a special form of a "control crisis" and the development of information infrastructure an attempted solution (which, following Beniger, eventually will lead to a subsequent "control crisis", see Ciborra (2000)).

There is an extensive body of both empirical and analytical arguments that emphasize local variation, contextual design, and design for situated action (see e.g. Greenbaum and Kyng, 1991; Kyng and Mathiassen, 1997; Suchman, 1987; Williams, 1997). For any given information system to work, the argument goes, it has to be tailored according to the requirements of the local context of use. And, as such, a local context will necessarily be unique, constituted by locally produced and institutionalized practices and the existing infrastructural resources.

There exists a substantial body of empirical studies that support this argument for local adaptation to local contexts. For instance, Tricker (1999) describes the implementation of a state-wide EDI network in Hong Kong and Singapore, which had very different outcomes due to the cultural differences between the two countries. Ives and Jarvenpaa (1991) state that one of the main problems is the determination of global versus local requirements and the political and legal issues of local ownership of data. In addition, they point to the problems with outdated or unreliable communications systems in developing countries.

This general argument for situated design, by now a well established and largely accepted position among information systems scholars, has been demonstrated particularly vividly in relation to the diffusion or transfer of technology from developed to less developed countries (Braa, Monteiro and Reinert, 1995; Hanna, Guy, and Arnold, 1995; Lind, 1991; Ryckeghem, 1998; Sahay, 1998). The inscribed assumptions about local conditions, organizational hierarchies and work relations clash with the context of the developing countries. For instance, Sahay (1998) demonstrates how assumptions about the degree of familiarity with maps as well as the strength of central, governmental control inscribed in Western GIS efforts failed to translate gracefully to an Indian context.

The problem, however, with this argument for situated, local context is how to account for the instances of information systems that actually do cut across contexts. Taken literally, the argument for situated action has little or nothing to offer in terms of providing an explanation for why infrastructure technology actually works. What is called for, then, is an approach, a vocabulary and concepts that help us balance between a (literal) situated action argument while curbing a naive belief in uniform solutions (Bowker and Star, 1999; Hanseth, Monteiro and Hatling, 1996).

The argument for situated design, when exaggerated, takes on a rather dogmatic flavor. Detailed, situated accounts - in the absence of additional remarks - effectively function as a way of formatting the problem so as not to pose the question about similarities across contexts. Further, the balance between local variation and standardization often gets misconstrued as an issue of control: the local variation is seen as a way to regain control, to work against or "around" the top-down and enforced structuring of information systems (Gasser, 1986; Kyng and Mathiassen, 1997). In this way, local variation is portrayed as a response to the intrinsic limitations of standardization. Hence, the standardized categories are portrayed as an iron cage, an enforced structure that is to be opposed. On this account, standardization presupposes docile elements whereas local variation signals regained control. This, we argue, is a serious misconception. It is rather the case, as Timmermans and Berg (1997, p. 291) argue, that the dichotomy is illusory in the sense that the local variation, "work arounds" or tinkering are necessarily required – they are not merely the compensation for inaccurate design:

"This tinkering with the [standardized] protocol, however, is not an empirical fact showing the limits of standardization in practice. We do not point at these instances in order to demonstrate the ‘resistance’ of actors to domination. Rather, we argue that the ongoing subordination and (re)articulation of the [standardized] protocol to meet the primary goals of the actors involved is a sine qua non for the functioning of the [standardized] protocol in the first place."

In other words, it is not, as one might easily be led to believe through the situated design argument, a particularly fruitful position to be hostile to universal standards. It is rather the case that the real issues circle around questions like: how do standards come about; how do the negotiations unfold; who has to fill in the glitches to make standards work; where should the balance between the global and the local be drawn. Our pragmatic balance, then, amounts to making explicit the work or "costs" associated with establishing working infrastructures, a task that is notoriously difficult to identify as it tends to become invisible (Bowker and Star, 1999; Monteiro, 1998; O’Connell, 1993; Timmermans and Berg, 1997).

This is inspired by Star and Bowker (1999, p. 108), where they note that "true universality is necessarily always out of reach". Still, like all navigating devices, the ongoing striving for completion is productive. A splendid example is the prolonged study of classification that has been conducted by Bowker and Star (1999). The International Classification of Diseases (ICD) is an archetypal illustration of how to balance between local and global needs. The ICD is a one-hundred-year-old list, centrally administered by the World Health Organization (WHO), aimed at mapping diseases that threaten public health. It is used by general practitioners, hospitals, insurance companies, statisticians, governments and others world-wide. It implements WHO’s efforts of categorizing causes of death for statistical and clinical purposes. In practice, however, it has proven nearly impossible to fully standardize the ICD due to local work practices, cultural differences and diverging requirements and interests. Bowker and Star (1999) demonstrate convincingly how the aim of a global solution unfolds as an ongoing negotiation process around what shall be recorded, the level of detail, the purpose, and for whose benefit and at whose cost. Still, it makes perfectly good, pragmatic sense to hold that ICD "works".

Despite our affinity with Bowker and Star (1999), there are two aspects in which our study deviates from theirs. Their emphasis is on spelling out the inscribed interests and agendas of the different classifications. There is little attention to the actual, everyday use of these classifications, the local improvisations. In this respect, we come closer to the more process oriented accounts of Timmermans and Berg (1997). Hence, as in our case, the emphasis is on how standardized solutions and local resources are molded and meshed fluently in the ongoing use of the system. In addition, the standardization of survey work in MCC is highly sensitive to a dilemma that is intrinsic in much service work. Although standardization is perceived as a viable strategy for rationalization also for service work, this has a strong unintended consequence that needs to be kept invisible to the customers of that service: standardized service is typically equated with low quality. As pointed out by Leidner (1993, p. 30):

"[U]niformity of output, a major goal of routinization, seems to be a poor strategy for maintaining quality...since customers often perceive rigid uniformity as incompatible with quality"

This dilemma presents an additional element in the negotiation of where to draw the line between universal solutions and situated, local variations in MCC. This line is also subject to the perceived quality of the service that MCC delivers through their surveys.

The empirical evidence presented in this paper draws from a longitudinal case study of a global organization called MCC. It is interpretative in nature (Klein and Myers, 1999), aimed at "producing an understanding of the context of the information system, and the process whereby the information system influences and is influenced by the context" (Walsham, 1993, pp. 4-5). In addition, our approach to IS research is inspired by Actor-Network Theory which focuses on tracing different actors, translations of interests, and inscriptions of interests and intentions in artifacts (Berg, 1999; Law, 1986; Monteiro, 2000; Timmermans and Berg, 1997).

Prior to the study, one of the researchers had worked as a consultant on the software project for 6 months in 1997. The case study was conducted by one of the researchers from 1998 to 2001, and included in-context interviews and observations on four different sites (coded A, B, C, and HQ) located in two different Scandinavian countries. This, of course, limits the possibility for making generalized assumptions about how local transformations carry over to locations where the cultural and institutional characteristics differ substantially from a Scandinavian setting. However, it was not the aim to give a complete description or a comprehensive evaluation of MCC’s entire information infrastructure. In this paper, we focus on the ongoing transformations of local work practices in relation to the standardized information system that we have called the "Surveyor Support System". Still, all "global" phenomena need to be traced to local expressions; the local feeds into the "global". Hence, "global" accounts are necessarily local. We have attempted to compensate for the fact that the set of local sites is highly restricted and confined by supplementing it with indirect evidence. As background material, we have used MCC’s own evaluation of the system at other sites (Kuala Lumpur, Singapore, Dubai and Rotterdam) as well as discussions with surveyors returning from these sites.

Over 50 semi-structured and in-depth interviews lasting from 1 to 3 hours were conducted with a total of 38 informants during 1998 – 2001 (see table 1).

| Type of informant | Number of informants | Coding |
| --- | --- | --- |
| Surveyors in office A | 6 | Surveyor #1 - #6, A |
| Surveyors in office B | 6 | Surveyor #1 - #6, B |
| Engineers/Support personnel at HQ | 5 | Engineer #1 - #5 |
| Business managers at HQ | 10 | Manager #1 - #10 |
| Managers in the software development project | 5 | Software manager #1 - #5 |
| Senior software developers | 2 | Senior developer #1 - #2 |
| Superusers | 2 | Superuser #1 - #2 |
| District managers | 2 | District manager #1 - #2 |

Table 1: Overview of interviews and informants

The key informants, e.g. managers in the software development project and business managers, have been interviewed up to 3 times. Most of the interviews were conducted in-context. The interviews of the managers were held in the managers’ own offices and in the case of the surveyors most of the interviews were conducted while they were working with the Surveyor Support System. In office B, three surveyors (#1, #2, #3) were followed over four days through the entire work process of reporting a survey job. One of the researchers spent approximately 2-3 days a week at the HQ from March 2000 to August 2000. During the process of data collection, findings and design alternatives were actively discussed with managers and software developers. More formal feedback was also given through seminars and academic papers. This process helped to uncover misunderstandings and challenged the researchers’ views on particular issues and problems. This also resulted in a greater awareness of different actors’ opposing opinions and views, thus illustrating the principles of multiple interpretations and suspicion by Klein and Myers (1999).

In discussions with managers and developers, interesting examples of what Anthony Giddens refers to as the "double hermeneutics" occurred. Some of the managers in MCC started to actively use some of the researchers’ own vocabulary. For example, the concept of "work arounds", as defined by Gasser (1986), became a well-known concept used to describe problems related to use of the Surveyor Support System.

- MCC and the business of surveying

- The "Surveyor Support System" and the guiding visions

MCC is a Norwegian-based but globally operating company with 300 offices in over 100 countries. It has over 5500 employees organized in a divisional hierarchy with one division represented in each region of the world. Northern Europe, especially the Nordic countries, is a major cluster. MCC is a company with an established tradition and pride after 135 years in business. A core business area is the classification of various types of ships conducted by 1500 surveyors located in 300 different ports world-wide. This classification involves assessing the condition of the ship and is a prerequisite in connection with issues of insurance. Classification is conducted according to the international regulations provided by the International Maritime Organization (IMO) as well as MCC’s own classification rules. The international business of classification is highly globalized. It is under an increasing pressure marked by world-wide competition and structural changes due to mergers and acquisitions.

Many ships operate globally and want to spend a minimum of time at dock. A so-called annual survey has to be done within a "time window" of 3 months, otherwise the ship owner risks losing his certificate. Thus, it is critical for MCC’s customers that MCC can provide identical services wherever the ship is located within the given "time window". Consequently, standardizing services globally and providing transparent access to information regarding ships and surveys are essential to offer flexibility and quality to the customer.

The engineers in MCC, often called surveyors, conduct annual surveys of ships operating world-wide. During an annual survey, miscellaneous parts of the ship including the hull, machinery, rudder, electronic equipment, emergency procedures and safety systems are inspected. This is to ensure compliance with classification rules and international regulations, and in some cases, particular national regulations.

Most activities in MCC included some manipulation, use or production of paper-based documents such as survey reports (surveys, for short), checklists, drawings, type approval certificates, renewal lists for certificates and approval letters. Traditionally, the surveys have been produced on paper. The surveyors had established a system of 74 (!) different paper-based checklists for supporting different types of surveys.

It is a guideline consisting of different items that you should go through – but you will have to look at other things too (Surveyor #3, A)

The checklists were tailored according to the different contexts and environments. Thus, there was no standard representation or common use of terminology, and these checklists had not been a part of the official documentation given to the customers. This proliferation of non-standardized, paper-based checklists was perceived as hampering the quality and efficiency of MCC’s global operations. It was a key motivation underpinning the design of the Surveyor Support System that has been introduced.

MCC has during the last few years invested heavily in various IT systems as well as strategic research concerned with how to adopt state-of-the-art information technologies related to MCC’s products and services. The strong commitment and emphasis on IT based solutions was backed by top management, as symbolically gestured when the CEO announced 1997 as "The year of IT". As a consequence, MCC implemented a common IT infrastructure for all 300 stations around the world in 1997. This standardized infrastructure included a physical wide-area network based on NT servers and TCP/IP and standardized configurations for PCs with Microsoft Office 97. This IT infrastructure was expected to provide all employees in MCC with transparent access to documents, drawings, certificates and all information regarding classification.

This IT infrastructure was a prerequisite for implementing a common information system (from now on called the Surveyor Support System) for enabling a distributed classification process based on state-of-the-art software technologies and a product and process model. In short, the Surveyor Support System is a state-of-the-art client/server system built on Microsoft’s COM architecture as middleware and a common SQL-based database server. The system was intended to run at the largest offices by early 1998 but was delayed one year due to continuous adjustments.

The "Surveyor Support System" is MCC’s largest software development project to date, and goes back to the early 1990s when the IT department undertook several pre-projects aimed at developing various calculation packages for surveyors. A, arguably the, key element in these efforts was a so-called product model. It was intended to provide a standardized way of describing the various modules of a vessel, inspired by similar efforts in the manufacturing of airplanes and cars. In essence, product models enable exchanging, sharing and storing product data within one and among several organizations. MCC’s efforts to spell out a product model were aligned with ongoing efforts by the International Organization for Standardization (ISO). Frustrated by ISO’s lack of progress, i.e. its tendency to produce idealized models rather than working solutions (see e.g. Hanseth and Monteiro, 1996; Williams, 1997), MCC abandoned this strategy. However, even if MCC’s focus and means shifted, the aim of a uniform product model to support standardized work processes prevailed. The management of MCC perceived strict standardization as the only viable strategy for streamlining global survey work:

I think the solution is to make it [the Surveyor Support System] as generic as possible – there is just no other way to do it – if we really want a system to be used world-wide (Engineer #5)

While managers tended to focus on the organizational and business related issues as reasons for standardization, software developers emphasized the necessity for standardizing on concepts, terminology and a common world-view across departments and user groups for technical reasons:

[It] was part of the vision to have a product model because we had to develop various applications, which will all be using the same data within the same domain – it’s utterly logical that they should speak the same language. Like TCP/IP is the standard on the Internet, the product model is the standard for the domain knowledge of MCC (Senior developer #1)

For sure, management were aware that the Surveyor Support System would challenge different communities’ entrenched practices and interests (Braa and Rolland, 2000); organizational politics was acknowledged and expected up-front as a barrier:

Our business is 135 years old and we have long traditions – a ‘stiffener’ is not a ‘stiffener’ among different groups and departments… Thus, some political problems will come to the surface. If we want a product model as a foundation, the prerequisite is to speak the same language. (Software manager #5)

Still, the extent and details of how the institutionalized practices, technologies and terminology differed were only gradually and painfully grasped.

By early 2001, MCC had succeeded in transforming their global work processes from being paper-based to becoming increasingly dependent on the Surveyor Support System, in the sense of deployment and increasing use of the system. The system has also distributed different work tasks that earlier were done at the HQ, and in addition it is argued that the system has led to a higher degree of uniformity in the work tasks carried out by the surveyors. In this way, MCC considers the Surveyor Support System to have provided the organization with many benefits.

- Dealing with irrelevant issues and categories

- Adding new categories in situ

- ‘Invisible’ work tasks inherently linked

- Inscribed sequential logic

The checklists and step-by-step work procedures in the Surveyor Support System are designed to be universally applicable. For particular surveys, however, this level of standardization has certain "costs" for the surveyors. Regardless of their relevance to the survey job at hand, all details (i.e. items in the checklists) and categories (i.e. checklists) are exposed to the user. This implies extra work as the surveyors have to explicitly fill in data and categorize a range of items that are irrelevant to the given survey. For any given survey, the surveyor accordingly deals with the full-blown complexity of every type of survey and every issue around surveying.

The reason why this generates additional work is that the information that needs to be provided is comprehensive. The paper-based versions of the checklists often exceeded 90 pages. It is a time-consuming task to explicitly categorize all items as either "Found in order", "Found not in order", "Repaired/Rectified", "Not applicable", or "Not inspected". Hence, here the "costs" are the additional work tasks that have to be carried out by the surveyors and that did not exist with the paper-based system:

In the old days we used to walk around with paper-based checklists – and only a few of these lists were sent to HQ. With the Surveyor Support System we get a lot of additional work tasks because we have to explicitly write something on every item in the checklists. It’s quite evident that this is not an advantage for the surveyor (Surveyor #2, B)

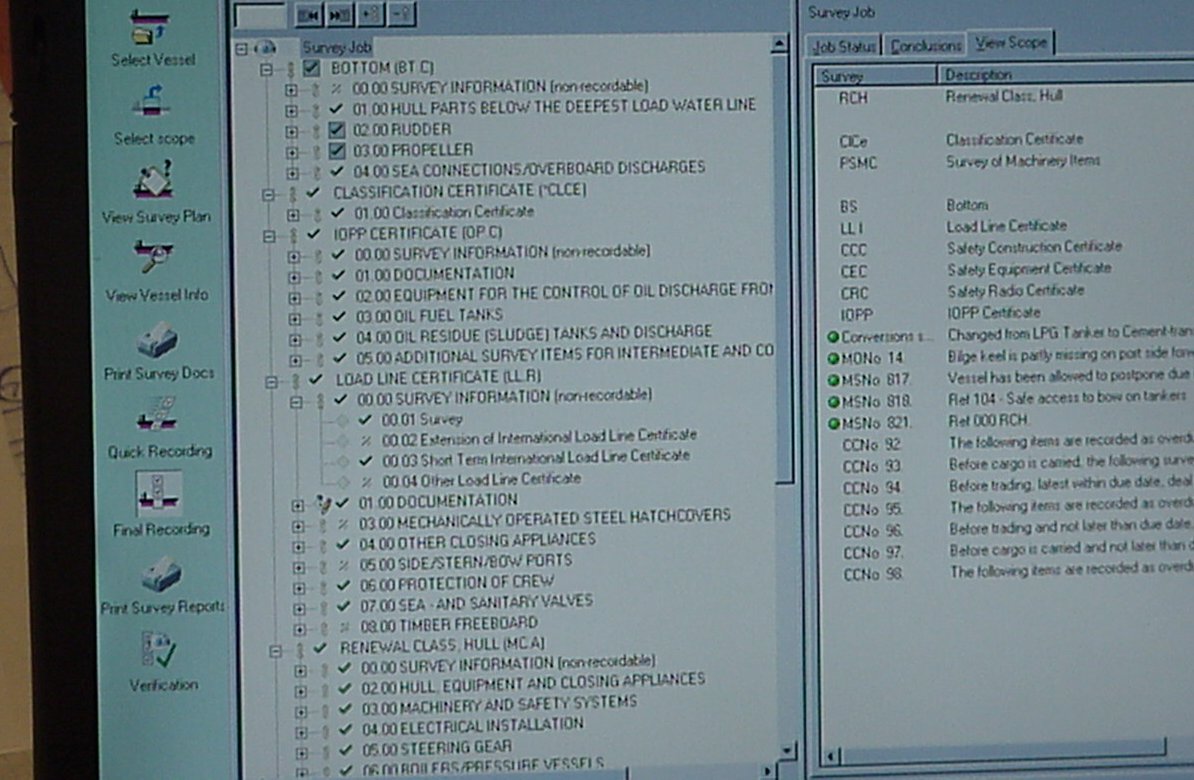

Picture 1: Screen showing checklists for a typical survey job having all the items aggregated. Work tasks to be performed by the surveyor are shown to the left. To the right memos and failures are listed.

To illustrate, consider the following scenario where the surveyor is using the system when reporting a survey that includes the rudder of the ship. The surveyor has just returned to his office after finishing inspecting, and is using the Surveyor Support System to report it. The problem he is facing is how to categorize those items that are irrelevant to his survey. Are they "Not applicable" or "Not inspected", he ponders:

In this system, it’s quite hard because you cannot just say "No". Consider this one [pointing at the screen] called "Propeller nozzles and/or tunnels". This vessel does not have one so I’ve selected "Not Applicable". But it could just as well been "Not inspected". […]. This can be very tricky. Our procedures do not specify that we have to take the rudder down, which means that this "02.09 Dismantling of Rudder" is not a requirement. This is only supposed to be done if I find something that indicates that I need to. There [pointing to the middle of picture 1] you can write "Not applicable", but then the following items become irrelevant as you simply can’t see the rudder stocks when the rudder is not dismantled. Here [pointing] it makes sense to say "Not inspected". This is also true for "02.11 Rudder shaft or pintles and bearings" and "02.12 Max. bearing clearances after repair". Since the rudder is not down, it is impossible to inspect these things. And "Max. bearing clearances" gives absolutely no meaning here – these measures are only relevant when the vessel has been repaired. And if so, of course I would have categorized it as "Repaired/Rectified". (Surveyor #3, B)

The Surveyor Support System actually supports reporting a survey that includes the rudder, because it provides a checklist where the user can fill in collected information concerning the rudder (see "02.00 Rudder", picture 1). However, what, how, and where the collected information regarding the rudder is to be filled in is pre-defined and inscribed into the electronic checklists. In recording this survey job, the surveyor is simultaneously and intrinsically exposed to every detail concerning a rudder. All of these details are of course important on a general level, but they are rarely relevant in one specific context and situation. For instance, in this particular case it makes no sense to report measurements related to the dismantling or repair of the rudder.

In a different case, a surveyor was working with what was considered a fairly straightforward survey job. The surveyor had just arrived back at his office after completing an "annual survey" of a ship. Before he went onboard, however, he had to use the Surveyor Support System to select the scope for the survey, get an order number from a secretary to fill out the order form, and print out the checklists and some other documents. Since the "intermediate survey" for this particular ship was due shortly, the Surveyor Support System automatically included checklists both for the "annual" and the "intermediate" survey. An "intermediate survey" covers the same as an "annual" but with some additional details. It is to be conducted during the second or third year following the "initial survey". The surveyor explains that the problem is "that these checklists are nearly identical – you’ve to enter the same information twice" (Surveyor #2, B), and the surveyor is unable to delete or omit one of the checklists:

You don’t know whether they want to take the intermediate survey now along with the annual – or if they want to wait until next year. You only discover if it’s possible to do the intermediate survey when you get out there. (Surveyor #2, B)

Hence, since there is no way of knowing in advance whether it is possible to do only the annual survey or whether there is time to do the slightly more complicated intermediate survey, the Surveyor Support System always includes support for both cases, leaving the surveyor with the additional work of filling out both checklists.

There are cases where improvisation beyond the predefined categories and work tasks is absolutely mandatory for being able to report a specific survey. One of the surveyors was faced with the problem of reporting failures that are directly linked to the fact that the vessel has been re-built:

These failures given here are directly linked to the fact that this used to be a gas tanker. It’s gas codes… […] But now it’s not a gas tanker but a normal cargo ship. So now I have to contact HQ and tell them that this ship has been re-built. (Surveyor #3, B)

But this does not necessarily solve the problem for his fellow surveyor, the next one to survey the vessel. He is concerned about how to make sure that his colleague is made aware of the conversion. Without this essential cue, the failure report is utterly incomprehensible. As a result, he improvises and creates an additional, non-standard survey which he calls a "conversion survey".

Here I had to create a totally new survey which I called a ‘Conversion survey’ – it’s really only a free-text field [shows how this is done]…[…] It had some memos attached, which is not needed anymore because it’s not a gas tanker, and this certificate will also be withdrawn [points on the screen]. But I have to recreate the memo to signal the need for the periodical survey within May 99 (Surveyor #3, B)

In this way, the surveyor added a new category of surveys called a "Conversion survey" consisting of just one checklist with one text field, where the failures he wanted to report were specified. Furthermore, by attaching a memo to this new survey the surveyor passes on the knowledge concerning the conversion of the ship to the succeeding surveyor, and to HQ, because after the conversion forthcoming surveys have to be re-scheduled.

The Surveyor Support System does not, contrary to the intentions and ambitions, cover all aspects of the surveyors’ work. To use the system effectively, a number of supplementary sources of information are used, such as memos, reports and technical data. Using them tends to involve work that is neither visible, supported nor specified in work procedures. This kind of work is essentially invisible – but at the same time necessary. For instance, in a particular case a surveyor found the following memo attached to his survey job:

Memo to surveyor: 805 1999-03-08.

Sister vessel suffered crack problems in bracketed connections between floor flange and tank top plating in ballast wing tanks. Repair proposal available.

In this particular survey, the surveyor saw it as highly relevant to get hold of the "Repair proposal", which had been prepared by a previous surveyor. However, since the "Repair proposal" was located at HQ, this implied additional work tasks – including using technologies like phones, fax machines, and e-mail – that are not part of or integrated with the Surveyor Support System (see picture 2). Furthermore, apart from the additional technologies, the surveyor emphasized that he had to find someone "who is willing" to dig out the report from the archive at HQ, one of the largest paper-based archives in Norway. Hence, the surveyor must also rely on his informal contacts outside the local office:

Normally, if you think the information in the MS [Memo to Surveyor] is relevant – you’ve to try to find it. But you’ve got to call or fax someone – and you’ve got to find a person at HQ who is willing to get the information in the ship’s paper file and look up the correct reference and find the repair proposal. […] and I don’t know whether this can be e-mailed. Things like this you can’t get from the system. If it was possible to get access to the report with the reference 850 1999-03-08 in the system – it would have saved you a hell of a lot of time. (Surveyor #1, B)

The surveyors have to depend on a range of heterogeneous technologies and social relations not formalized or a priori given by the standard in terms of work procedures and the Surveyor Support System. To improve this situation, however, MCC has started a project for scanning paper-based reports and making them available electronically through hyper-links in the Surveyor Support System.

Picture 2: Surveyor in the office: the ‘invisible’ work that makes the standard work.

The Surveyor Support System does not only provide predefined categories like types of surveys and checklist items; it also inscribes a certain sequence of working. This is partly due to technical issues, namely that different software components need to be updated, and partly because the Surveyor Support System is intentionally designed to support a predefined work process. This work process consists of nine ordered tasks: "Select vessel", "Select scope", "View survey plan", "View vessel information", "Print survey documents", "Quick recording", "Final recording", "Print survey report", and "Verification".

In some situations, the linear logic enforced by the system does not fit very well with the surveyors’ need to have a conception of the whole (i.e. the overall structure of the report) while they are entering data concerning a detail somewhere in the hierarchical structure of a checklist:
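The idea of an inscribed sequence can be made concrete with a minimal Python sketch. It is an illustration only: the class `SurveyJob` and its methods are hypothetical names introduced here, and the strict one-step-at-a-time enforcement is an assumption made for the sake of the example, not a description of how the Surveyor Support System is actually implemented.

```python
from typing import List, Optional

# The nine ordered tasks as named in the text.
TASKS: List[str] = [
    "Select vessel",
    "Select scope",
    "View survey plan",
    "View vessel information",
    "Print survey documents",
    "Quick recording",
    "Final recording",
    "Print survey report",
    "Verification",
]


class SurveyJob:
    """Hypothetical model of a survey job that only accepts tasks in order."""

    def __init__(self) -> None:
        self.completed: List[str] = []

    def next_task(self) -> Optional[str]:
        """Return the only task the user may perform next, or None when done."""
        if len(self.completed) < len(TASKS):
            return TASKS[len(self.completed)]
        return None

    def perform(self, task: str) -> None:
        """Record a task; reject anything other than the next task in line."""
        expected = self.next_task()
        if expected is None:
            raise ValueError("All tasks are already completed")
        if task != expected:
            raise ValueError(f"Expected {expected!r}, got {task!r}")
        self.completed.append(task)


job = SurveyJob()
job.perform("Select vessel")
print(job.next_task())
```

In this sketch there is deliberately no method for skipping or reordering tasks, which is one simple way a predefined work process can be built into software rather than left to written procedures.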

I’m always forced to enter information on the lowest and most detailed level. This is extremely time-consuming – and the work becomes very fragmented […] it’s chaotic, [and] I miss the ability to have a view of the whole while I’m working on a specific detail. In many cases I realize that I’ve to fill in a blank line – and I click back and forth to ensure that the report gets a proper outline (Surveyor #1, B)

Thus, the surveyors constantly have to "swap" between different screens to get a sense of how a small detail affects the overall outline of the final report. Furthermore, in some cases when one task has been performed and submitted, the user is not allowed to undo it later, a rather strong inscription for ensuring that the tasks are performed in the correct order. From the point of view of the surveyors, this predefined order of tasks is not always appropriate:

[All] memos and failures have to be reported using the Quick report. That means that all the text to be included in the Final report is also generated in the Quick report. But there is no way of going back…[…] When you first have submitted the Quick report with memos and failures – you cannot do this differently in the Final report. […] This is a dilemma, [as] you just cannot report a failure when some of the data is lacking […] (Surveyor #2, B)

Moreover, this inscription has some problematic unintended consequences:

[In] these situations, we postpone submitting the Quick report. But in many cases I try to finish the Quick report anyway – because I just send the information missing in a separate report through e-mail some days later. (Surveyor #2, B)

According to the defined work procedures, however, the surveyors are instructed to submit the Quick report within the following working day, and thus simply cannot wait to submit even if they are very well aware that some data is missing. The unintended consequence of this is that some of the data is archived locally in local databases or paper-based records. Subsequently, the fragmentation that the system was precisely meant to eradicate is re-introduced. This also implies additional work for the next surveyors in line, as they may have to contact a previous surveyor in order to get the information they need:

Many times I’ve postponed my Quick reporting, because I think "I just wait another day". I call them up and ask for the information I need. But, in fifty percent of the cases – I’ve to tell them that I cannot wait – and then I continue to do my [Quick] reporting anyway. I know that I’m missing some of the details – but I don’t want to waste time, so that information is eventually archived here locally. So, if anybody wants more information – they have to contact me. But, I don’t feel that this is optimal way of working… (Surveyor #6, B)

In the later re-designs of the Surveyor Support System, however, the problem around the "Quick reports" was solved by making it possible to make corrections in the final report.

- Acquiring universalism

- "Costs" – but for whom?

- Communities-of-surveying

- Quality – for whom?

It is tempting – but ultimately neither too difficult nor novel – to merely identify the numerous ways in which large-scale IS efforts like that in MCC fail to fit with the many situated, local contexts (e.g. Kyng and Mathiassen, 1997; Suchman, 1987). There is simply no way a single solution, serving 300 ports in 100 countries world-wide, can "fit" equally well. The real issue, we argue, is to analyze how global, never-perfect solutions are molded, negotiated and transformed over time into workable solutions. Hence, we aim at articulating some of the work and costs associated with the process of making solutions acquire universalism (Bowker and Star, 1999; Monteiro, 2000; O’Connell, 1993; Timmermans and Berg, 1997). The many ways in which "universal" solutions from developed countries fail to transfer smoothly to developing countries illustrate the amount of additional work that is necessary (Braa and Monteiro, 1995; Hanna, Guy and Arnold, 1995; Sahay, 1998).

This perspective, where universalism is a produced effect, implies a critique of much of the work within CSCW. As pointed out by Berg (1999), inquiring whether (or not) a given solution "fits" a situated context fails to do justice to the dynamics and the transformations that make up the molding and negotiation process. A solution does not "support" situated work, it transforms it. The key issue, then, is to articulate and make explicit the costs and benefits in these transformations that go into producing "universal" solutions. Only then can judgement be passed on whether the distribution of these is reasonable.

As described above, universalism was initially attempted through a fully integrated, standardized and complete product model. However, already during design, ambiguity and uncertainty had to be negotiated. In addition, negotiations and improvisations involved in categorizing checklist items, using memos, and adding new survey types are an inherent and necessary effect of the standard. Thus, pragmatic tradeoffs and situational actions are taken throughout the Surveyor Support System’s life cycle. This illustrates how acquiring universality is an ongoing process. It takes work to produce universality (O’Connell, 1993; Timmermans and Berg, 1997). Categories are not naturally given and established once and for all (Bowker and Star, 1999). Additionally, the actual use of these categories is essential for their working in the first place. For instance, information provided by the Surveyor Support System through the memos is not always useful without the additional documents that are referenced in the memo. As illustrated above, many supplementary work tasks have to be carried out in order to get the "Repair proposal". These work tasks are neither predefined nor part of the standard. Rather, they depend on the surveyors’ social relations and their ability to use other infrastructural technologies. Instead of thinking of – and aiming for – the system to cover all aspects of surveying, it should rather be recognized as but one element in a working infrastructure. Documents can for example be made available through MCC’s Intranet or a document database, which has been done in similar cases. The point is that adding more "functionality" and integrating other information-based resources will not ultimately establish universality (Ellingsen and Monteiro, 2001), because additional functionality and resources also have ‘invisible’ work inherently linked to them, and categories will be created through actions taken in situ.
In this respect, some degree of "fragmentation" and heterogeneity is not merely the evidence of a failed standard (Timmermans and Berg, 1997). The Surveyor Support System is actually used to produce reports; surveyors coordinate their work through it and add information to a common database. This implies, in a pragmatic sense, that it "works".

In the case of MCC, standardization is necessary for making sure that the succeeding surveyors can continue working on a survey and make sense of information reported in previous surveys. Without this, there simply is no survey. The question, then, is whether MCC has been able to balance the various local needs with the need for an overarching standard, and at what "costs"? And for whom? In the following subsections, we take a look at some of these costs that are connected to individuals, communities, and to the organization as a whole.

In their discussion of ICD, Bowker and Star (1999) point out the dilemmas around how accurate and detailed information needs to be. Transaction costs involved in collecting and managing information, they argue, tend to multiply with increased precision. This tends to create a tension between different interest groups, as they need different levels of detail and accuracy in different contexts. This problem is recursive, and therefore not automatically solvable by standardization.

As for the ICD, it makes sense to argue that the Surveyor Support System "works" in the sense that reports are filed and ships are surveyed. As illustrated in section 5, this is largely due to the way the individual surveyors’ work has been transformed by performing additional work:

As it is now, we get a lot of extra work – with these checklists and entering all the data. (Surveyor #3, A)

As argued by Timmermans and Berg (1997), however, the surveyors do not perceive this as an iron cage that they are trapped in. They fairly fluently use and improvise workable routines. The way they postpone the final filing of the reports in order to make sure that all the details are included illustrates that they tend to build flexibility into the defined standard through their work. In this way, both their work practices and the Surveyor Support System get transformed (Berg, 1999). When it is particularly important that the report gets delivered at once, for instance if another surveyor is going on board the same vessel the following day, the report gets submitted and the information regarding the details is archived locally.

On the other hand, most managers conceive of this as a result of inappropriate design and irregularities in work procedures, and hold that this transformation undermines central coordination and control. As local, individual surveyors decide whether to submit or to wait, there is simply no way management can control this process. For management, this undermines the intention of the Surveyor Support System as a complete and integrated database, as expressed by a manager in the implementation project: "we are constantly struggling with the fact that users don’t act as they have been told" (Manager #4). Locally stored copies generate additional work and "costs" also for the surveyors, as they have to track down and contact individuals at other offices to get the most recent information about a vessel. Accordingly, management tried to add functionality that would inscribe a standardized way of working into the Surveyor Support System and so curb improvisations by surveyors:

We are adding a lot of functionality to the system – some work arounds disappear after doing these modifications – but new ones tend to turn up. It’s an ongoing battle. (Manager #4)

Hence, local improvisation – essential for the actual working of the system – was perceived as a threat to properly standardized surveying routines (Ciborra, 2000).

Prior to the implementation of the Surveyor Support System, work tasks related to coordinating a survey job were done through the use of standardized paper forms, more or less administered centrally from HQ. Consequently, implementation of the Surveyor Support System implied a distribution of these tasks:

We are distributing our work processes …we want to be as close as possible to the customer. The system [Surveyor Support System] represent a common database – thus it will not matter where the actual work is done …This implies that we have to standardize our way of working – and design new processes – but they have to be accepted by the entire organization. (Manager #9)

In the initial phases, the project was dominated by high expectations about the way coordination and a smooth flow of work would be ensured by and inscribed in the Surveyor Support System itself. This neglected the additional work the surveyors do to coordinate their work with their fellow surveyors. Rather than acknowledging and facilitating informal networks and knowledge sharing among the community of surveyors (Brown and Duguid, 1991; Wenger, 1998), the system attempts centralized control. This neglects the hybrid collectives of surveyors, free-text reports, locally stored information and faxes that constitute surveying in practice.

A fairly invisible cost associated with the standardization of survey work is its implication for quality. MCC’s prospects lie in its ability to preserve its customers’ perception of high-quality service. The intention with the Surveyor Support System is precisely to deliver these services more effectively with increased, or at least the same, quality. What was perceived as "high-quality services", however, varied significantly among the different actors. From the management’s point of view, standardized work procedures along with the Surveyor Support System were prerequisites for delivering high-quality services and hence standardization was seen as essential:

We wrote special instructions to cover our quality requirements in relation to the implementation. It was quite detailed, and we established a number of ‘best-practices’ where we described what we conceived as the best ways of doing things on a world-wide basis. And then after having discussed this, we distributed it – with the aim of getting as standardized solutions as possible also on a very detailed level. (Manager #6)

Similarly, from a technical point of view, software developers argued that standardization becomes important for establishing one common data model, and also for streamlining the existing work processes:

Standardization of work processes is very important in the project, especially for the production systems like the Surveyor Support System. (Software manager, #1)

Clearly, for software developers to be able to deliver "high-quality" software, standardizing work processes globally becomes important, so that the Surveyor Support System would "fit" all offices equally. Hence, software developers have an inherent interest in standardizing work processes, since common work processes imply simpler requirements and design.

As emphasized by Leidner’s (1993, p. 30) study of service work, standardization is associated with low quality, a position closely aligned with the surveyors’ notions about quality. Hence, there is a real dilemma and tension in how quality is defined. In MCC, this dilemma gets played out through the surveyors’ modifications of the generated reports and by adding new categories of surveys. In fact, much of the extra work described above is done to ensure high-quality reports. Thus, surveyors in the field often have a very different view of what it means to deliver a "high-quality service" to customers. To them, simply following the generic checklists and the standardized work tasks does not ensure "high-quality" – and hence, they were surprised that the Surveyor Support System "forced" them to use the checklists in a certain way:

You can’t just pick out item 2.1 on all vessels – and say OK here’s a problem, because the vessels are so different, systems are differently constructed, components are different – hence I don’t really see the advantages. I was very surprised when the system [the Surveyor Support System] was so focused on checklists. (Surveyor #3, B)

Through improvisations, surveyors devise clever ways of tailoring the system and the reports to meet their desired level of quality for different customers.

Arguing for a pragmatic approach to analyzing infrastructural information systems is neither heroic nor high-profiled. It amounts to tracing the costs and benefits, distribution and voices of the associated transformations to work out a reasonable balance. As pointed out already, what is "reasonable" for one need not be that for the next. Still, the articulation of what it takes for global solutions to acquire the quality of universalism is necessary (Timmermans and Berg, 1997). Only through these exercises in making the invisible costs and work (more) explicit can a more pragmatic balance be negotiated (Bowker and Star, 1999). That there is still a need for this is indicated by the difficulties of establishing large-scale, infrastructural information systems more generally, both in developed and in less developed countries (Ciborra, 2000; Monteiro, 1998; Sahay, 1998; Tricker, 1999; Williams, 1997).

Like most IT systems and infrastructures, the Surveyor Support System was designed in the Western part of the world and transferred to over 130 offices all around the world. Offices visited during our study differed culturally from other offices in, for instance, Singapore and Dubai. However, whether it is Singapore or Scandinavia, standardized technologies like the Surveyor Support System become transformed in a mutual shaping process with the local context. These dynamic processes may locally develop along different trajectories, but due to the distributed nature of survey work and the shared infrastructure, the different local shaping processes do not happen in isolation from each other. Thus, in designing and implementing a shared infrastructure like the Surveyor Support System, local needs must always be weighed against the need for a well-functioning infrastructure that encompasses different communities-of-practice, technologies, and diverging interests and needs.

Design, then, amounts to tracing the costs and benefits, distribution and voices of the associated transformations in order to work out a reasonable balance. It is quite evident that one cannot find one optimal solution (Bowker and Star, 1999), and that there will always be some costs related to establishing "universal" solutions. What is important, then, is to illuminate exactly what these costs are, and their implications for the different actors involved.

More specifically, our study of the Surveyor Support System supports Bowker and Star’s (1999) point that it is unrealistic and counterproductive to try to destroy all uncertainty and ambiguity in such infrastructural tools. In summing up, the following three important implications for design can be drawn:

Firstly, the Surveyor Support System tended to impose a too detailed way of entering data into the system. Surveyors were exposed to a range of details irrelevant for a specific survey. Moreover, all items had to be categorized independently of their relevance to the particular problem at hand. This illustrates that the accuracy of information must be weighed against the costs of collecting, registering, managing, and reusing such information. A higher level of accuracy seems to decrease flexibility in the surveyors’ work and increase complexity and uncontrollability on the managerial level. Hence, a lower degree of detail and accuracy required by the Surveyor Support System would make the system more flexible and probably enhance efficiency in use.

Secondly, which cases are considered "special cases" is important because they often require additional work to avoid misunderstandings, inform other surveyors, and retrieve additional information. An a priori given structure of information and order of work tasks designed for "normal cases" may curb the alternative structures and orders of work tasks needed for "special cases". In the MCC case, deviations from intended use did not primarily stem from lack of user training or lack of computer skills, but from the necessity of reporting special cases like the "conversion survey". Hence, the surveyors’ skills and knowledge of surveying and their commitment to perform additional work tasks are essential for preserving quality throughout the process. A redesign of the system made the surveyors able to use the system more freely, and this solved some problems. For instance, when the restrictions on modifying generated reports were removed, this led to a significant reduction in average completion times for survey reports, because it increased the opportunity for reporting "special cases". This also illustrates that an infrastructural information system needs to support a flexible way of re-structuring information to establish what Bowker and Star (1999) denote as "boundary objects".

Thirdly, in designing systems like the Surveyor Support System it is impossible to cover all aspects. The Surveyor Support System fails to be an all-embracing perfect solution, but succeeds in being one element in a larger infrastructure when surveyors are allowed to improvise beyond the pre-given categories (Ellingsen and Monteiro, 2001). Thus, in designing such systems, not all information resources should be expected to become fully integrated in a common data model. For instance, it turned out to be more successful to simply provide links and pointers to information in the Surveyor Support System than to include all available information in a common database.

It would be a serious misreading to infer from the points given above that the Surveyor Support System is a typical example of a failed IS project. On the contrary, it illustrates that MCC’s focus on continuously developing the system through pragmatic adjustments and re-designs has been successful, and that this process has been essential for striking a reasonable balance. The benefit of the Surveyor Support System is that it enables completely new work processes and ensures that information is collected in a similar way across different sites. This is considered important for creating new services, including special statistical overviews and reports that will give MCC's customers added value.

In this paper, we have illustrated some of the "costs" associated with use of the system in local contexts. Hence, our study does not give a complete description or evaluation of the Surveyor Support System at a more aggregated organizational level. The examples used to illustrate the costs inherent in use of large-scale IT systems and infrastructures are not necessarily representative of how the system "works" in other local contexts or after continuous development of the system. However, our study shows the dilemmas in balancing local and global requirements involved when designing large-scale IT systems and infrastructures.

The precondition for being able to balance the costs in infrastructural information systems in the design process is to follow a "reflexive design process". This involves designing the system gradually through iterative processes, where the current design is continuously negotiated and costs on different organizational levels are weighed. This implies ensuring flexibility by always keeping the possibilities open, both technically and politically, for re-designing the system. Spelling out the various types of "costs", i.e. additional work, is exactly what the improvisations involved in technology transfer from developed to developing countries call for.

Acknowledgement: We are grateful for feedback on an earlier version of this paper that was presented at the IFIP W.G. 9.4 conference in Cape Town in May 2000 aimed at IT in developing countries. We have also benefited from comments and discussions with Kristin Braa, Ole Hanseth, Lucy Suchman, and the involved managers at MCC. Sundeep Sahay has provided extensive advice as a Guest editor.